Follow this article when you want to parse Parquet files or write data in Parquet format.

Parquet is designed to improve efficiency, and it performs much better than row-based files such as CSV. As a result, aggregation queries are far less resource- and time-consuming than the same query run against row-based data. An additional benefit is that, with columnar storage, queries can quickly skip irrelevant data.

This section describes how to use PXF to access Parquet-format data in an object store, including how to create and query an external table that references a Parquet file in the store.
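The point about skipping irrelevant data can be made concrete with a toy sketch. It uses plain Python lists as stand-ins for the two layouts; the table, column names, and helper functions are all hypothetical, and this is not how Parquet actually encodes data on disk. It only counts how many values an aggregation has to touch in each layout.

```python
# Toy illustration only: in-memory lists stand in for on-disk layouts.
# The sample data and function names are made up for this sketch.

# Row-oriented layout: one record per row, all fields stored together.
rows = [
    {"order_id": 1, "country": "CA", "price": 10.0},
    {"order_id": 2, "country": "US", "price": 25.5},
    {"order_id": 3, "country": "CA", "price": 4.5},
]

# Column-oriented layout: one list per column.
columns = {name: [row[name] for row in rows] for name in rows[0]}


def sum_price_row_layout(rows):
    """Sum `price`, counting every field read along the way."""
    total, values_read = 0.0, 0
    for row in rows:
        values_read += len(row)  # the whole record is read to reach `price`
        total += row["price"]
    return total, values_read


def sum_price_column_layout(columns):
    """Sum `price` by reading only the `price` column."""
    prices = columns["price"]
    return sum(prices), len(prices)


row_total, row_reads = sum_price_row_layout(rows)
col_total, col_reads = sum_price_column_layout(columns)
print(row_total, row_reads)
print(col_total, col_reads)
```

Both layouts produce the same total, but the column layout reads one value per row instead of every field of every row. The gap widens as a table gains columns that a given query does not need.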

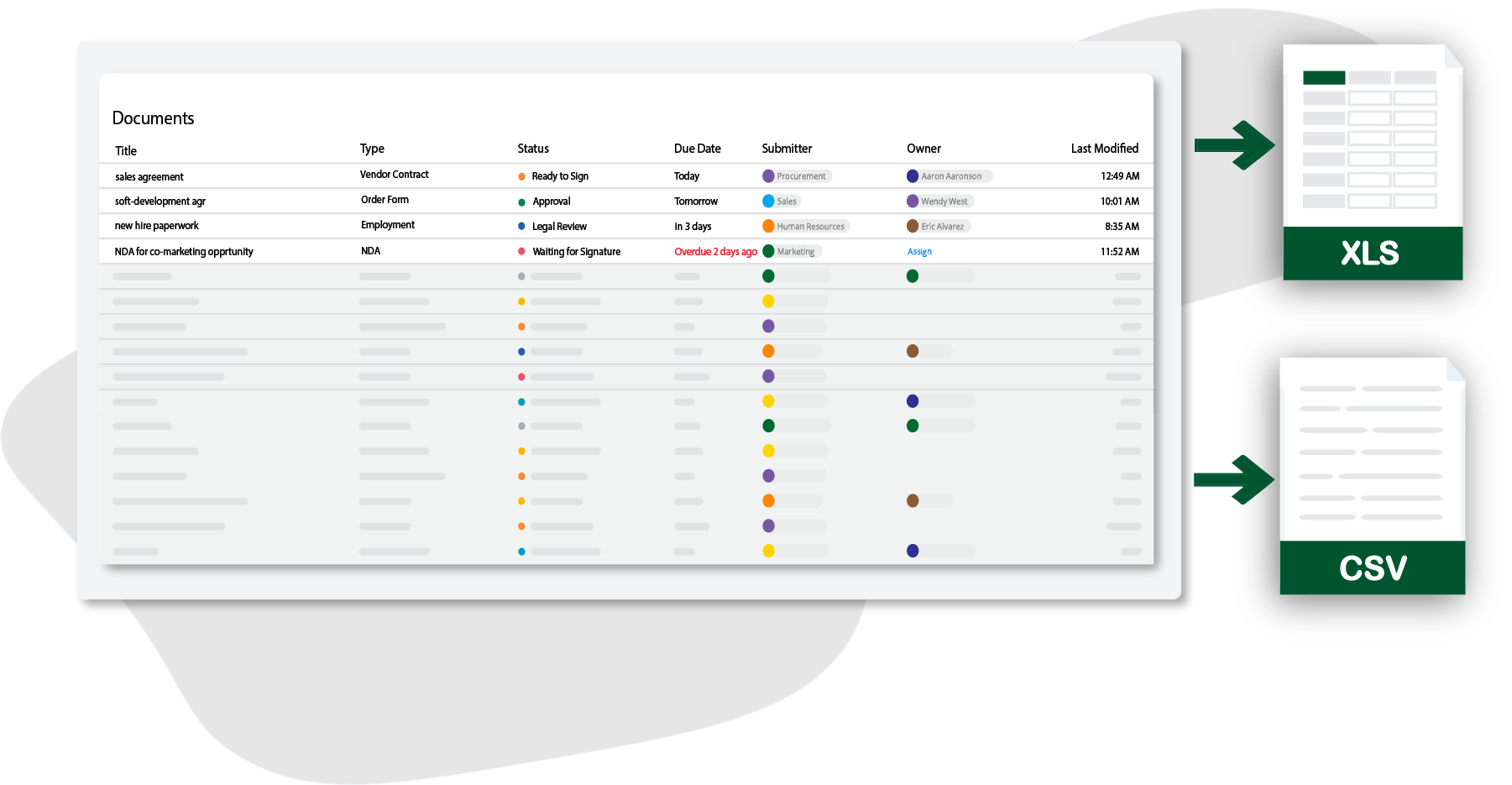

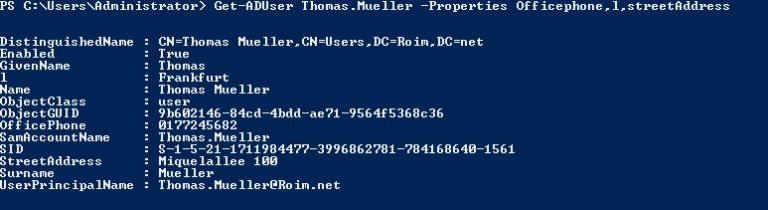

The PXF object store connectors support reading and writing Parquet-format data.

Most cloud providers make everything seem really easy. Amazon describes getting AWS Athena running as “simply pointing to your data in Amazon S3, define the schema, and start querying using standard SQL”. Easy, right? While technically accurate, what data is it pointing to?

I have found an efficient way to read Parquet files into a pandas DataFrame in Python. The code is as follows, for anyone looking for an answer: import azure.identity; import pandas as pd; import pyarrow.fs; import pyarrowfs_adlgen2; handler = pyarrowfs_adlgen2.AccountHandler.from_account_name('YOUR_ACCOUNT_NAME', azure.identity.DefaultAzureCredential()); fs = pyarrow.fs.PyFileSystem(handler); df = pd. …

Apache Parquet is a columnar file format that provides optimizations to speed up queries. It is a far more efficient file format than CSV or JSON. For supported read and write options, see the Apache Spark reference articles.

If you plan on using Parquet as the base of your organization’s data warehouse, additional features such as ACID guarantees and transaction logs are really beneficial. Delta Lake is an open-source “lakehouse” framework that combines the dynamic nature of a data lake with the structure of a data warehouse. Delta stores the data as Parquet and adds a layer over it with advanced features: a history of events (the transaction log) and more flexibility in changing the content, such as update, delete, and merge capabilities. One drawback is that it can get very fragmented. The linked Delta article explains quite well how the files are organized.
Sure, you can run a query using the portal, Visual Studio, or Visual Studio Code, but all those tools only provide access to the generated file (which can be easily obtained or previewed). The main reason is that U-SQL / Data Lake Analytics is geared toward long-running jobs (which can take from a few minutes to hours) to process the vast …

The data lake at SSENSE heavily relies on Parquet, which makes sense given that we deal with immutable data and analytics queries, for which columnar storage is optimal.

SELECT * FROM OPENROWSET (BULK '…', FORMAT = 'PARQUET') AS …

This is a quick and easy way to read the content of the files without pre-configuration.

When you load Parquet data from Cloud Storage, you … Parquet is an open source column-oriented data format that is widely used in the Apache Hadoop ecosystem.

See “MSSparkUtils is the Swiss Army knife inside Synapse Spark” and “Essential tips for exporting and cleaning data with Spark”, and check out the end-to-end tutorials in Microsoft Fabric. I am reusing part of the scripts from those two blogs and adapting them to the features available in Fabric.

I'm just finding my way around Azure, trying to build a modern data warehouse. One thing I haven't been able to figure out is how to query my data lake from an Azure SQL database. Data must be exported in parallel and saved in Parquet format. Something similar to the following works in Azure Synapse (note that the long-term plan is to remove Synapse for cost reasons): SELECT top 100 * FROM …
